Tutorial pyAgrum

In [1]:

from pylab import *

import matplotlib.pyplot as plt

import os

Creating your first Bayesian network with pyAgrum

(This example is based on an OpenBayes [closed] website tutorial)

A Bayesian network (BN) is composed of random variables (nodes) and their conditional dependencies (arcs) which, together, form a directed acyclic graph (DAG). A conditional probability table (CPT) is associated with each node. It contains the conditional probability distribution of the node given its parents in the DAG:

Such a BN allows to manipulate the joint probability \(P(C,S,R,W)\) using this decomposition :

Imagine you want to create your first Bayesian network, say for example the ‘Water Sprinkler’ network. This is an easy example. All the nodes are Boolean (only 2 possible values). You can proceed as follows.

Import the pyAgrum package

In [2]:

import pyAgrum as gum

Create the network topology

Create the BN

The next line creates an empty BN network with a ‘name’ property.

In [3]:

bn=gum.BayesNet('WaterSprinkler')

print(bn)

BN{nodes: 0, arcs: 0, domainSize: 1, dim: 0, mem: 0o}

Create the variables

pyAgrum(aGrUM) provides 4 types of variables :

LabelizedVariable

RangeVariable

IntegerVariable

DiscretizedVariable

In this tutorial, we will use LabelizedVariable, which is a variable whose domain is a finite set of labels. The next line will create a variable named ‘c’, with 2 values and described as ‘cloudy?’, and it will add it to the BN. The value returned is the id of the node in the graphical structure (the DAG). pyAgrum actually distinguishes the random variable (here the labelizedVariable) from its node in the DAG: the latter is identified through a numeric id. Of course, pyAgrum provides functions to get the id of a node given the corresponding variable and conversely.

In [4]:

id_c=bn.add(gum.LabelizedVariable('c','cloudy ?',2))

print(id_c)

0

You can go on adding nodes in the network this way. Let us use python to compact a little bit the code:

In [5]:

id_s, id_r, id_w = [ bn.add(name, 2) for name in "srw" ] #bn.add(name, 2) === bn.add(gum.LabelizedVariable(name, name, 2))

print (id_s,id_r,id_w)

print (bn)

1 2 3

BN{nodes: 4, arcs: 0, domainSize: 16, dim: 4, mem: 64o}

When you add a variable to a graphical model, this variable is “owned” by the model but you still have (read*only) accessors between the 3 related objects : the variable itself, its name and its id in the BN :

In [6]:

print(f"{bn.variable(id_s)=}")

print(f"{bn['s']=}")

print(f"{id_s=}")

print(f"{bn.idFromName('s')=}")

print(f"{bn.variable(id_s).name()=}")

bn.variable(id_s)=(pyAgrum.DiscreteVariable@0x6000012e12c0) s:Range([0,1])

bn['s']=(pyAgrum.DiscreteVariable@0x6000012e12c0) s:Range([0,1])

id_s=1

bn.idFromName('s')=1

bn.variable(id_s).name()='s'

Create the arcs

Now we have to connect nodes, i.e., to add arcs linking the nodes. Remember that c and s are ids for nodes:

In [7]:

bn.addArc("c","s")

Once again, python can help us :

In [8]:

for link in [(id_c,id_r),(id_s,id_w),(id_r,id_w)]:

bn.addArc(*link)

print(bn)

BN{nodes: 4, arcs: 4, domainSize: 16, dim: 9, mem: 144o}

pyAgrum provides tools to display bn in more user-frendly fashions. Notably, pyAgrum.lib is a set of tools written in pyAgrum to help using aGrUM in python. pyAgrum.lib.notebook adds dedicated functions for iPython notebook.

In [9]:

import pyAgrum.lib.notebook as gnb

bn

Out[9]:

Shorcuts with fastBN

The functions fast[model] encode the structure of the graphical model and the type of the variables in a concise language somehow derived from the dot language for graphs (see the doc for the underlying method : fastPrototype).

In [10]:

bn=gum.fastBN("c->r->w<-s<-c")

bn

Out[10]:

Create the probability tables

Once the network topology is constructed, we must initialize the conditional probability tables (CPT) distributions. Each CPT is considered as a Potential object in pyAgrum. There are several ways to fill such an object.

To get the CPT of a variable, use the cpt method of your BayesNet instance with the variable’s id as parameter.

Now we are ready to fill in the parameters of each node in our network. There are several ways to add these parameters.

Low-level way

In [11]:

bn.cpt(id_c).fillWith([0.4,0.6]) # remember : c= 0

Out[11]:

|

|

|

|---|---|

| 0.4000 | 0.6000 |

Most of the methods using a node id will also work with name of the random variable.

In [12]:

bn.cpt("c").fillWith([0.5,0.5])

Out[12]:

|

|

|

|---|---|

| 0.5000 | 0.5000 |

Using the order of variables

In [13]:

bn.cpt("s").names

Out[13]:

('s', 'c')

In [14]:

bn.cpt("s")[:]=[ [0.5,0.5],[0.9,0.1]]

Then \(P(S | C=0)=[0.5,0.5]\) and \(P(S | C=1)=[0.9,0.1]\).

In [15]:

print(bn.cpt("s")[1])

[0.9 0.1]

The same process can be performed in several steps:

In [16]:

bn.cpt("s")[0,:]=0.5 # equivalent to [0.5,0.5]

bn.cpt("s")[1,:]=[0.9,0.1]

In [17]:

print(bn.cpt("w").names)

bn.cpt("w")

('w', 'r', 's')

Out[17]:

|

|

| ||

|---|---|---|---|

|

| 0.3143 | 0.6857 | |

| 0.2140 | 0.7860 | ||

|

| 0.4809 | 0.5191 | |

| 0.1617 | 0.8383 | ||

In [18]:

bn.cpt("w")[0,0,:] = [1, 0] # r=0,s=0

bn.cpt("w")[0,1,:] = [0.1, 0.9] # r=0,s=1

bn.cpt("w")[1,0,:] = [0.1, 0.9] # r=1,s=0

bn.cpt("w")[1,1,:] = [0.01, 0.99] # r=1,s=1

Using a dictionary

This is probably the most convenient way:

In [19]:

bn.cpt("w")[{'r': 0, 's': 0}] = [1, 0]

bn.cpt("w")[{'r': 0, 's': 1}] = [0.1, 0.9]

bn.cpt("w")[{'r': 1, 's': 0}] = [0.1, 0.9]

bn.cpt("w")[{'r': 1, 's': 1}] = [0.01, 0.99]

bn.cpt("w")

Out[19]:

|

|

| ||

|---|---|---|---|

|

| 1.0000 | 0.0000 | |

| 0.1000 | 0.9000 | ||

|

| 0.1000 | 0.9000 | |

| 0.0100 | 0.9900 | ||

Subscripting with dictionaries is a feature borrowed from OpenBayes. It makes it easier to use and avoids common mistakes that happen when introducing data into the wrong places.

In [20]:

bn.cpt("r")[{'c':0}]=[0.8,0.2]

bn.cpt("r")[{'c':1}]=[0.2,0.8]

Input/output

Now our BN is complete. It can be saved in different format :

In [21]:

print(gum.availableBNExts())

bif|dsl|net|bifxml|o3prm|uai|xdsl|pkl

We can save a BN using BIF format

In [22]:

gum.saveBN(bn,"out/WaterSprinkler.bif")

In [23]:

with open("out/WaterSprinkler.bif","r") as out:

print(out.read())

network "unnamedBN" {

// written by aGrUM 1.13.0.9

}

variable c {

type discrete[2] {0, 1};

}

variable r {

type discrete[2] {0, 1};

}

variable w {

type discrete[2] {0, 1};

}

variable s {

type discrete[2] {0, 1};

}

probability (c) {

table 0.5 0.5;

}

probability (r | c) {

(0) 0.8 0.2;

(1) 0.2 0.8;

}

probability (w | r, s) {

(0, 0) 1 0;

(1, 0) 0.1 0.9;

(0, 1) 0.1 0.9;

(1, 1) 0.01 0.99;

}

probability (s | c) {

(0) 0.5 0.5;

(1) 0.9 0.1;

}

In [24]:

bn2=gum.loadBN("out/WaterSprinkler.bif")

We can also save and load it in other formats

In [25]:

gum.saveBN(bn,"out/WaterSprinkler.net")

with open("out/WaterSprinkler.net","r") as out:

print(out.read())

bn3=gum.loadBN("out/WaterSprinkler.net")

net {

name = unnamedBN;

software = "aGrUM 1.13.0.9";

node_size = (50 50);

}

node c {

states = (0 1 );

label = "c";

ID = "c";

}

node r {

states = (0 1 );

label = "r";

ID = "r";

}

node w {

states = (0 1 );

label = "w";

ID = "w";

}

node s {

states = (0 1 );

label = "s";

ID = "s";

}

potential (c) {

data = ( 0.5 0.5);

}

potential ( r | c ) {

data =

(( 0.8 0.2) % c=0

( 0.2 0.8)); % c=1

}

potential ( w | r s ) {

data =

((( 1 0) % s=0 r=0

( 0.1 0.9)) % s=1 r=0

(( 0.1 0.9) % s=0 r=1

( 0.01 0.99))); % s=1 r=1

}

potential ( s | c ) {

data =

(( 0.5 0.5) % c=0

( 0.9 0.1)); % c=1

}

Inference in Bayesian networks

We have to choose an inference engine to perform calculations for us. Many inference engines are currently available in pyAgrum:

Exact inference, particularly :

gum.LazyPropagation: an exact inference method that transforms the Bayesian network into a hypergraph called a join tree or a junction tree. This tree is constructed in order to optimize inference computations.others:

gum.VariableElimination,gum.ShaferShenoy, …

Samplig Inference : approximate inference engine using sampling algorithms to generate a sequence of samples from the joint probability distribution (

gum.GibbSSampling, etc.)Loopy Belief Propagation : approximate inference engine using inference algorithm exact for trees but not for DAG

In [26]:

ie=gum.LazyPropagation(bn)

Inference without evidence

In [27]:

ie.makeInference()

print (ie.posterior("w"))

w |

0 |1 |

---------|---------|

0.3529 | 0.6471 |

In [28]:

from IPython.core.display import HTML

HTML(f"In our BN, $P(W)=${ie.posterior('w')[:]}")

Out[28]:

With notebooks, it can be viewed as an HTML table

In [29]:

ie.posterior("w")[:]

Out[29]:

array([0.3529, 0.6471])

Inference with evidence

Suppose now that you know that the sprinkler is on and that it is not cloudy, and you wonder what Is the probability of the grass being wet, i.e., you are interested in distribution \(P(W|S=1,C=0)\). The new knowledge you have (sprinkler is on and it is not cloudy) is called evidence. Evidence is entered using a dictionary. When you know precisely the value taken by a random variable, the evidence is called a hard evidence. This is the case, for instance, when I know for sure that the sprinkler is on. In this case, the knowledge is entered in the dictionary as ‘variable name’:label

In [30]:

ie.setEvidence({'s':0, 'c': 0})

ie.makeInference()

ie.posterior("w")

Out[30]:

|

|

|

|---|---|

| 0.8200 | 0.1800 |

When you have incomplete knowledge about the value of a random variable, this is called a soft evidence. In this case, this evidence is entered as the belief you have over the possible values that the random variable can take, in other words, as P(evidence|true value of the variable). Imagine for instance that you think that if the sprinkler is off, you have only 50% chances of knowing it, but if it is on, you are sure to know it. Then, your belief about the state of the sprinkler is [0.5, 1] and you should enter this knowledge as shown below. Of course, hard evidence are special cases of soft evidence in which the beliefs over all the values of the random variable but one are equal to 0.

In [31]:

ie.setEvidence({'s': [0.5, 1], 'c': [1, 0]})

ie.makeInference()

ie.posterior("w") # using gnb's feature

Out[31]:

|

|

|

|---|---|

| 0.3280 | 0.6720 |

the pyAgrum.lib.notebook utility proposes certain functions to graphically show distributions.

In [32]:

gnb.showProba(ie.posterior("w"))

In [33]:

gnb.showPosterior(bn,{'s':1,'c':0},'w')

inference in the whole Bayes net

In [34]:

gnb.showInference(bn,evs={})

inference with hard evidence

In [35]:

gnb.showInference(bn,evs={'s':1,'c':0})

inference with soft and hard evidence

In [36]:

gnb.showInference(bn,evs={'s':1,'c':[0.3,0.9]})

inference with partial targets

In [37]:

gnb.showInference(bn,evs={'c':[0.3,0.9]},targets={'c','w'})

Testing independence in Bayesian networks

One of the strength of the Bayesian networks is to form a model that allows to read qualitative knwoledge directly from the grap : the conditional independence. aGrUM/pyAgrum comes with a set of tools to query this qualitative knowledge.

In [38]:

# fast create a BN (random paramaters are chosen for the CPTs)

bn=gum.fastBN("A->B<-C->D->E<-F<-A;C->G<-H<-I->J")

bn

Out[38]:

Conditional Independence

Directly

First, one can directly test independence

In [39]:

def testIndep(bn,x,y,knowing):

res="" if bn.isIndependent(x,y,knowing) else " NOT"

giv="." if len(knowing)==0 else f" given {knowing}."

print(f"{x} and {y} are{res} independent{giv}")

testIndep(bn,"A","C",[])

testIndep(bn,"A","C",["E"])

print()

testIndep(bn,"E","C",[])

testIndep(bn,"E","C",["D"])

print()

testIndep(bn,"A","I",[])

testIndep(bn,"A","I",["E"])

testIndep(bn,"A","I",["G"])

testIndep(bn,"A","I",["E","G"])

A and C are independent.

A and C are NOT independent given ['E'].

E and C are NOT independent.

E and C are independent given ['D'].

A and I are independent.

A and I are independent given ['E'].

A and I are independent given ['G'].

A and I are NOT independent given ['E', 'G'].

Markov Blanket

Second, one can investigate the Markov Blanket of a node. The Markov blanket of a node \(X\) is the set of nodes \(M\!B(X)\) such that \(X\) is independent from the rest of the nodes given \(M\!B(X)\).

In [40]:

print(gum.MarkovBlanket(bn,"C").toDot())

gum.MarkovBlanket(bn,"C")

digraph "no_name" {

node [shape = ellipse];

0[label="A"];

1[label="B"];

2[label="C", color=red];

3[label="D"];

6[label="G"];

7[label="H"];

0 -> 1;

2 -> 3;

2 -> 1;

2 -> 6;

7 -> 6;

}

Out[40]:

In [41]:

gum.MarkovBlanket(bn,"J")

Out[41]:

Minimal conditioning set and evidence Impact using probabilistic inference

For a variable and a list of variables, one can find the sublist that effectively impacts the variable if the list of variables was observed.

In [42]:

[bn.variable(i).name() for i in bn.minimalCondSet("B",["A","H","J"])]

Out[42]:

['A']

In [43]:

[bn.variable(i).name() for i in bn.minimalCondSet("B",["A","G","H","J"])]

Out[43]:

['A', 'G', 'H']

This can be also viewed when using gum.LazyPropagation.evidenceImpact(target,evidence) which computes \(P(target|evidence)\) but reduces as much as possible the set of needed evidence for the result :

In [44]:

ie=gum.LazyPropagation(bn)

ie.evidenceImpact("B",["A","C","H","G"]) # H,G will be removed w.r.t the minimalCondSet above

Out[44]:

|

|

| ||

|---|---|---|---|

|

| 0.2175 | 0.7825 | |

| 0.5821 | 0.4179 | ||

|

| 0.1301 | 0.8699 | |

| 0.0343 | 0.9657 | ||

In [45]:

ie.evidenceImpact("B",["A","G","H","J"]) # "J" is not necessary to compute the impact of the evidence

Out[45]:

|

|

| |||

|---|---|---|---|---|

|

|

| 0.1632 | 0.8368 | |

| 0.1715 | 0.8285 | |||

|

| 0.1718 | 0.8282 | ||

| 0.1636 | 0.8364 | |||

|

|

| 0.2416 | 0.7584 | |

| 0.2936 | 0.7064 | |||

|

| 0.2954 | 0.7046 | ||

| 0.2446 | 0.7554 | |||

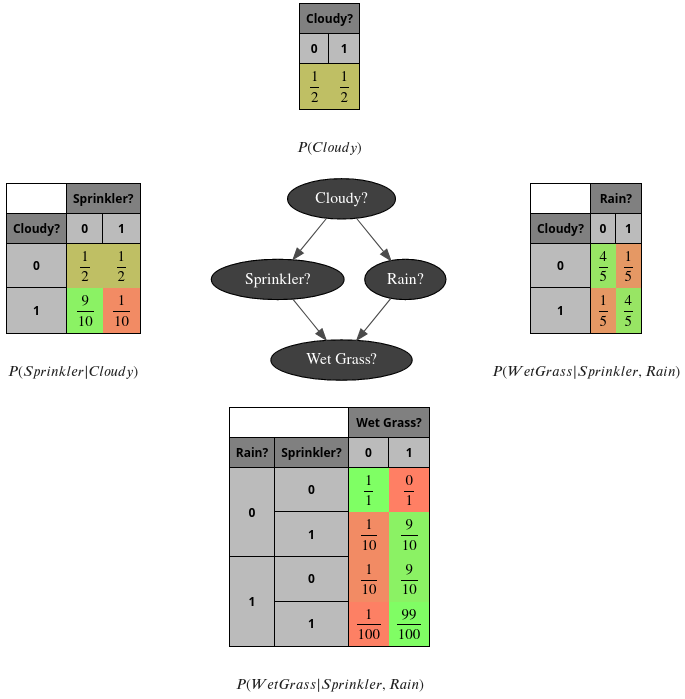

PS- the complete code to create the first image

In [46]:

bn=gum.fastBN("Cloudy?->Sprinkler?->Wet Grass?<-Rain?<-Cloudy?")

bn.cpt("Cloudy?").fillWith([0.5,0.5])

bn.cpt("Sprinkler?")[:]=[[0.5,0.5],

[0.9,0.1]]

bn.cpt("Rain?")[{'Cloudy?':0}]=[0.8,0.2]

bn.cpt("Rain?")[{'Cloudy?':1}]=[0.2,0.8]

bn.cpt("Wet Grass?")[{'Rain?': 0, 'Sprinkler?': 0}] = [1, 0]

bn.cpt("Wet Grass?")[{'Rain?': 0, 'Sprinkler?': 1}] = [0.1, 0.9]

bn.cpt("Wet Grass?")[{'Rain?': 1, 'Sprinkler?': 0}] = [0.1, 0.9]

bn.cpt("Wet Grass?")[{'Rain?': 1, 'Sprinkler?': 1}] = [0.01, 0.99]

# the next line control the number of visible digits

gum.config['notebook','potential_visible_digits']=2

gnb.sideBySide(bn.cpt("Cloudy?"),captions=['$P(Cloudy)$'])

gnb.sideBySide(bn.cpt("Sprinkler?"),gnb.getBN(bn,size="3!"),bn.cpt("Rain?"),

captions=['$P(Sprinkler|Cloudy)$',"",'$P(WetGrass|Sprinkler,Rain)$'])

gnb.sideBySide(bn.cpt("Wet Grass?"),captions=['$P(WetGrass|Sprinkler,Rain)$'])

PS2- a second glimpse of gum.config

(for more, see the notebook : config for pyAgrum)

In [47]:

bn=gum.fastBN("Cloudy?->Sprinkler?->Wet Grass?<-Rain?<-Cloudy?")

bn.cpt("Cloudy?").fillWith([0.5,0.5])

bn.cpt("Sprinkler?")[:]=[[0.5,0.5],

[0.9,0.1]]

bn.cpt("Rain?")[{'Cloudy?':0}]=[0.8,0.2]

bn.cpt("Rain?")[{'Cloudy?':1}]=[0.2,0.8]

bn.cpt("Wet Grass?")[{'Rain?': 0, 'Sprinkler?': 0}] = [1, 0]

bn.cpt("Wet Grass?")[{'Rain?': 0, 'Sprinkler?': 1}] = [0.1, 0.9]

bn.cpt("Wet Grass?")[{'Rain?': 1, 'Sprinkler?': 0}] = [0.1, 0.9]

bn.cpt("Wet Grass?")[{'Rain?': 1, 'Sprinkler?': 1}] = [0.01, 0.99]

# the next lines control the visualisation of proba as fraction

gum.config['notebook','potential_with_fraction']=True

gum.config['notebook', 'potential_fraction_with_latex']=True

gum.config['notebook', 'potential_fraction_limit']=100

gnb.sideBySide(bn.cpt("Cloudy?"),captions=['$P(Cloudy)$'])

gnb.sideBySide(bn.cpt("Sprinkler?"),gnb.getBN(bn,size="3!"),bn.cpt("Rain?"),

captions=['$P(Sprinkler|Cloudy)$',"",'$P(WetGrass|Sprinkler,Rain)$'])

gnb.sideBySide(bn.cpt("Wet Grass?"),captions=['$P(WetGrass|Sprinkler,Rain)$'])